Most analytics platforms are technology that grew a methodology.

A team builds a piece of software. The software does something interesting. Then someone, often in marketing, retrofits a methodology around it that explains what the software does in language that sounds disciplined enough to sell.

The Data Alchemy Framework is the opposite. It is methodology that grew a platform.

The framework existed for almost two decades before any of it was productised. It was built question by question, project by project, in commercial research engagements across financial services, FMCG, retail, and consumer credit. The technology arrived later, when it had finally caught up with what the methodology had always wanted to do.

That is not a marketing claim. It is a structural fact about how Knowsis was built. And it explains why the framework holds together as a coherent thing, while most consulting frameworks do not.

What Twenty Years of Commercial Research Actually Teaches You

Working at the intersection of analytics and strategic consulting for two decades teaches you something the industry rarely says out loud.

Most analytical methods are correct. Almost all of them are useless.

The technology to do nearly anything you want with consumer data has existed for years. You can run a hundred different segmentations on the same dataset and produce a hundred technically defensible answers. You can apply the latest model architecture to your survey data and generate findings that look impressive on a slide. The hard part is not the maths. The hard part is knowing which methods actually move the decisions a business needs to make, and which produce well-received reports that change nothing.

Twenty years of client-facing work is twenty years of building, refining, and selecting between analytical techniques. The Data Alchemy Framework is the menu that emerged from that work: seven analytical techniques for surfacing insight from data, organised so that the right one for any given problem can be identified quickly and the wrong ones discarded with confidence.

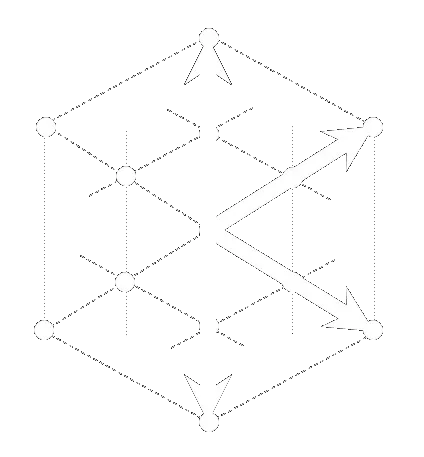

The Seven Pillars

The Data Alchemy Framework consists of seven analytical techniques for surfacing insight from data. They are not a checklist to be walked through. They are a menu to be chosen from.

Most engagements use one or two. Sometimes three. Rarely all seven. The framework's value is not in completeness; it is in precision about which technique fits which problem. Knowing which question you are actually asking is half the work. Knowing which technique answers it cleanly is the other half.

Description. What is actually here? Before deciding what to do with a dataset, profile it honestly. Cross-tabulate. Test for significance. Understand the structure of what you have, without pretending you know yet what it means. This is the first pillar because it is the one most often skipped, and skipping it is the source of more wasted analysis than any other single error.
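To make "cross-tabulate, test for significance" concrete, here is a minimal sketch of that step in plain Python. The survey rows, the segment labels, and the `chi_square` helper are all invented for illustration; this is not the Platform's implementation, only one simple way to profile two categorical variables.

```python
import math
from collections import Counter

# Hypothetical survey rows: (segment, purchased). Invented for illustration.
responses = (
    [("A", "yes")] * 30 + [("A", "no")] * 20 +
    [("B", "yes")] * 15 + [("B", "no")] * 35
)

def chi_square(table):
    """Return the Pearson chi-square statistic and degrees of freedom
    for a cross-tab stored as {(row_label, col_label): count}."""
    rows = sorted({r for r, _ in table})
    cols = sorted({c for _, c in table})
    n = sum(table.values())
    row_tot = {r: sum(table[(r, c)] for c in cols) for r in rows}
    col_tot = {c: sum(table[(r, c)] for r in rows) for c in cols}
    stat = 0.0
    for r in rows:
        for c in cols:
            # Count expected in this cell if rows and columns were independent.
            expected = row_tot[r] * col_tot[c] / n
            stat += (table[(r, c)] - expected) ** 2 / expected
    dof = (len(rows) - 1) * (len(cols) - 1)
    return stat, dof

table = Counter(responses)  # the cross-tabulation itself
stat, dof = chi_square(table)

# For a 2x2 table (dof == 1) the p-value has a closed form via erfc.
# Larger tables need a proper chi-square distribution function.
p_value = math.erfc(math.sqrt(stat / 2))

print(dict(table))
print(f"chi2={stat:.2f}, dof={dof}, p={p_value:.4f}")
```

The point of the sketch is the order of operations: count first, compare against what independence would predict, and only then decide whether the difference between segments is worth interpreting.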

Distillation. What is the underlying construct? A battery of twenty questions does not necessarily measure twenty things. Often it is measuring three or four things badly, because the construct beneath the items has not been identified. Distillation is the discipline of finding the latent variable and treating it as the unit of analysis, not the original questions.
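One simple way to check whether a group of items is measuring a single construct is Cronbach's alpha. It is a swapped-in illustration here, not necessarily the technique the framework itself uses, and the ratings below are invented. A minimal sketch, assuming each row is one respondent and each column is one question on the same scale:

```python
from statistics import variance

def cronbach_alpha(rows):
    """Cronbach's alpha for a list of respondents, each a list of item scores.

    alpha = k / (k - 1) * (1 - sum of item variances / variance of totals)
    """
    k = len(rows[0])                      # number of items
    items = list(zip(*rows))              # transpose: one tuple per item
    item_var = sum(variance(col) for col in items)
    total_var = variance([sum(r) for r in rows])
    return k / (k - 1) * (1 - item_var / total_var)

# Hypothetical 1-5 ratings from five respondents on three related questions.
ratings = [
    [1, 2, 1],
    [2, 2, 3],
    [3, 4, 3],
    [4, 4, 5],
    [5, 5, 5],
]

alpha = cronbach_alpha(ratings)
print(f"alpha = {alpha:.3f}")
```

A high alpha suggests the items move together closely enough to be treated as one score. It does not say how many constructs sit inside a larger battery; that question usually calls for factor analysis or a similar method.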

Segmentation. Who behaves coherently? Demographics describe people. Outcome-based segmentation describes behaviour. The pillar is not the technique. It is the commitment to defining groups by what they actually do, not by who they appear to be on a census form.

Synthetic. When fieldwork is insufficient, what can be safely scaled? Sometimes the sample is too small. Sometimes the population coverage is incomplete. Sometimes the data has missing values that conventional imputation cannot fill. The synthetic pillar is the discipline of scaling honestly, with a documented validation framework that says exactly when the synthetic data deserves to be trusted and when it does not.

Prediction. What is likely to happen, and how confident should we be? Prediction without humility is forecasting theatre. The pillar is the commitment to modelling outcomes rigorously while being honest about what the model can and cannot anticipate, what it is generalising from, and where its limits sit.

Causation. What is actually driving the behaviour? This is the hardest pillar and the one most often misunderstood. Self-reported importance and behavioural impact are not the same thing. Customers will tell you, sincerely, what matters to them. Their behaviour will tell you something else. Causation is the discipline of separating the two and acting only on the second.
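The gap between stated and behavioural importance can be illustrated with a small comparison. This is a sketch under loud assumptions: the attributes, scores, and purchase counts are invented, and correlation with an outcome is only a first-pass behavioural signal, not a causal estimate. Establishing causation takes more than this.

```python
import math

def pearson(xs, ys):
    """Pearson correlation between two equal-length numeric lists."""
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return cov / math.sqrt(sxx * syy)

# Hypothetical data. "stated" is the average importance customers report.
stated = {"price": 9, "service": 6, "app": 4}

# Satisfaction ratings per respondent, and their observed repeat purchases.
satisfaction = {
    "price":   [3, 4, 3, 4, 3, 4],
    "service": [1, 2, 2, 4, 4, 5],
    "app":     [5, 1, 4, 2, 3, 3],
}
repeat_purchases = [1, 2, 3, 4, 5, 6]

# How strongly each attribute tracks the behaviour we care about.
derived = {a: pearson(r, repeat_purchases) for a, r in satisfaction.items()}

stated_rank = sorted(stated, key=stated.get, reverse=True)
derived_rank = sorted(derived, key=derived.get, reverse=True)

print("stated: ", stated_rank)
print("derived:", derived_rank)
```

In this invented data customers say price matters most, while service satisfaction tracks repeat purchases far more closely. A mismatch like that is the prompt for proper causal work, not the conclusion of it.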

Synergy. Does the analysis hold together as a coherent workflow? An analytical engagement is not a sequence of disconnected studies. The output of one stage should inform the next. Description should shape Distillation. Distillation should feed Segmentation. Each pillar is more powerful when chained into a workflow than when run in isolation. Synergy is the pillar that makes the others count for more than the sum of their parts.

These are analytical techniques, organised by the kind of question they answer. The skill is not knowing all seven equally. The skill is knowing which to reach for when. Twenty years of commercial work taught me that most engagements need one or two of these techniques, deployed precisely. Almost none need all seven.

The Pareto Principle, Productised

The Data Alchemy Platform exists because of a pattern I noticed in my own work.

Across twenty years of client engagements, I was reaching for roughly twenty percent of my techniques eighty percent of the time. The Pareto principle is real, and it was real in my own analytical practice. A small subset of the framework's techniques was doing most of the commercial work, validated by repeated demand and repeated outcomes. The other techniques mattered too, but they came out of the toolkit only when the engagement specifically called for them.

Those high-frequency, high-ROI techniques became the Data Alchemy Platform. Description, Distillation, Segmentation, Synthetic, and Catalyst (the productised form of Causation) operate as live apps in the browser. The Alchemist Agent is in development as the conversational layer that orchestrates them.

The framework is broader than the Platform. It includes the techniques that operate as consulting capabilities for problems where the Pareto-bestsellers are not the right fit. It includes the methodological discipline behind every engagement that does not happen on the Platform at all. The framework is the full menu. The Platform is the menu's bestsellers, productised because the demand was there and the ROI was demonstrable.

That is what makes the difference, commercially. Most analytics products are tools that grew a methodology after the fact. The Data Alchemy Platform is the productised form of the techniques that twenty years of work proved most commercially viable. The framework came first. The Pareto Principle decided what got built.

Why This Matters Now

The research industry is watching a wave of new platforms launch every quarter, each promising to do something the others cannot, most powered by the same underlying technology and the same general claim that AI will transform research.

The technology is real. The transformation is genuine. But in a market where every competitor has access to roughly the same models, what differentiates a platform is not what it can do. It is whether the methodology behind it has earned the right to do those things.

Methodology that grew out of a sales narrative will not survive contact with a complex client problem. Methodology that grew out of twenty years of commercial work, with the productised tools selected by ROI rather than feature backlog, will. The framework is not a marketing claim. It is the accumulated discipline of two decades of client engagement, expressed as a coherent set of seven analytical techniques. The Platform productises the subset that demand and ROI selected from that work.

The platforms launching now will compete on features. The frameworks underneath them will compete on whether they actually help anyone make better decisions.

The Trilogy Becomes a Quartet

I have written elsewhere about where the philosophical foundations of Knowsis came from.

My Honours research into rock climbing motivation gave me the psychological lens that runs through Track 2, the intelligence products. My Master's research into evaluating wilderness therapy gave me the methodological discipline that runs through Track 1, the question of how to know whether anything has actually changed.

Twenty years of commercial research gave me the third foundation. Not the philosophy or the discipline, but the analytical techniques themselves and the experience to know which ones earned commercial keep. The Honours taught me what to study. The Master's taught me how to know if it worked. The commercial work taught me which techniques returned ROI consistently, and which did not.

The Data Alchemy Framework is where those three foundations meet: seven techniques refined across two decades. The Platform is the Pareto-subset that demand and ROI promoted into productised form.

Methodology before technology. The discipline first. The tools after.

That is the order things were built in, and it is the order they will keep working in.