Most segmentation projects take weeks. You collect the data, write a brief for an analyst or agency, wait for the model to be built, review the output, request revisions, and eventually get segments that may or may not actually change how you talk to your customers. I've run hundreds of these projects over 20 years, and the analytical work itself, the actual clustering and profiling, takes hours. Everything else is logistics.

The bottleneck isn't the mathematics. It's the handoffs.

Data preparation, methodology selection, iteration cycles. Each step involves a different person or team, each with their own queue. Meanwhile, your stakeholders are waiting. The insights you collected three months ago start losing commercial relevance. The timing you needed to act on is gone.

But here's what I've learned: on modern hardware, the analysis itself runs in minutes. You upload your survey data, set a few parameters, and a clustering algorithm profiles the respondents in less time than it takes to make a coffee. The hard part has never been the maths. The hard part has been the layers of process sitting on top of the maths.

Self-service segmentation solves this. Not by making the analysis simpler, but by removing the people and handoffs that slow it down.

Why Traditional Segmentation Falls Apart in Strategy

Before we talk about running segmentation yourself, we need to be honest about why most segmentation studies fail to deliver what they promise.

The traditional approach is seductive in its simplicity. You collect survey data, run a clustering algorithm on the raw responses, build personas, and move on. It looks tidy on a slide. But there is a fundamental problem: people are poor judges of what drives their own decisions. Rating scales are plagued by bias, compression, and social desirability. When you cluster on those raw inputs, you are grouping people by how they filled in a questionnaire, not by how they actually behave.

The result? Segments that look clean in a presentation but fall apart the moment you try to act on them. Customers who "look alike" in the data often think differently, feel differently, and act differently. Four consumers who are demographically identical, and who give you identical scores on your brand perception questions, can be driven by completely different motivations. One engages because of status. Another because of genuine product belief. A third because of habit. A fourth because the price is right. A segmentation based on raw survey responses would lump all four together and call the problem solved.

What Outcomes-Based Segmentation Actually Means

At Knowsis, we approach segmentation differently. Rather than clustering people by what they say, we segment based on what actually influences their behaviour, revealed through advanced modelling of real outcomes like NPS, trial, switching, or advocacy.

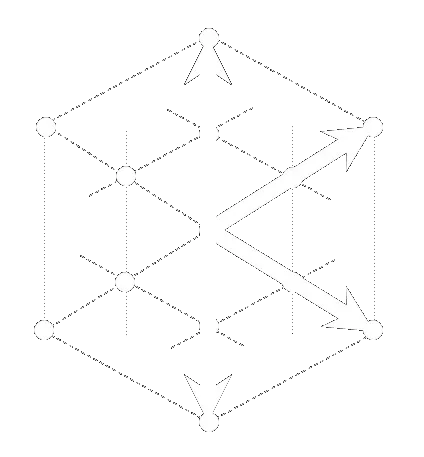

The methodology follows three distinct steps that traditional segmentation never takes.

First, model the outcome. We build a machine learning model that predicts a chosen behavioural outcome using your survey and experience data. This step isolates what actually moves the needle, rather than treating every variable equally. It is the analytical equivalent of asking "what actually matters?" before deciding how to group people.

Second, infer individual driver profiles. For each respondent, we generate a unique influence profile: a behavioural fingerprint showing the relative weight of each driver for that specific individual. This is where the approach fundamentally diverges from tradition. Most segmentation treats respondents as data points on a scatter plot. We treat each one as a person with a unique pattern of motivations. Some are driven primarily by price sensitivity. Others by emotional connection. Others by social influence. The fingerprint captures this individual variation.

Third, cluster by motivation patterns. Rather than clustering people by how they answered the survey, we cluster them by the shape of what influences them. The result is segments defined by shared drivers, not just shared demographics or shared attitudes.
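To make the three steps concrete, here is a minimal sketch in plain Python. It is not the Knowsis methodology, which is proprietary and not specified here. It assumes a simple linear outcome model in place of the machine learning model, linear contribution shares in place of the driver profiles, and basic k-means for the clustering. The data, the driver names (price, emotion), and every function name are made up for illustration.

```python
def fit_linear(X, y, lr=0.05, steps=2000):
    """Step 1 stand-in: least-squares outcome model fitted by gradient descent."""
    n, p = len(X), len(X[0])
    means = [sum(row[j] for row in X) / n for j in range(p)]
    y_mean = sum(y) / n
    centred = [[row[j] - means[j] for j in range(p)] for row in X]
    weights = [0.0] * p
    for _ in range(steps):
        residuals = [
            sum(w * v for w, v in zip(weights, row)) - (target - y_mean)
            for row, target in zip(centred, y)
        ]
        for j in range(p):
            gradient = sum(r * row[j] for r, row in zip(residuals, centred)) / n
            weights[j] -= lr * gradient
    return weights, means


def driver_profile(row, weights, means):
    """Step 2 stand-in: each driver's share of this respondent's predicted deviation."""
    contributions = [abs(w * (v - m)) for w, v, m in zip(weights, row, means)]
    total = sum(contributions)
    if total == 0:
        return [1 / len(row)] * len(row)  # respondent sits exactly at the average
    return [c / total for c in contributions]


def dist2(a, b):
    """Squared Euclidean distance between two profiles."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(points, k, iterations=20):
    """Step 3 stand-in: plain k-means with farthest-point initialisation."""
    centroids = [points[0]]
    while len(centroids) < k:
        centroids.append(max(points, key=lambda p: min(dist2(p, c) for c in centroids)))
    labels = [0] * len(points)
    for _ in range(iterations):
        labels = [min(range(k), key=lambda c: dist2(p, centroids[c])) for p in points]
        for c in range(k):
            members = [p for p, label in zip(points, labels) if label == c]
            if members:
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    return labels


# Made-up ratings for two drivers (price, emotion) on a 0-10 scale.
price_led = [[1.0, 4.5], [9.0, 5.5]] * 3    # price varies, emotion barely moves
emotion_led = [[4.5, 1.0], [5.5, 9.0]] * 3  # emotion varies, price barely moves
X = price_led + emotion_led
y = [0.5 * price + 0.5 * emotion for price, emotion in X]  # synthetic outcome

weights, means = fit_linear(X, y)
profiles = [driver_profile(row, weights, means) for row in X]
segments = kmeans(profiles, k=2)
print(segments)
```

The point of the toy data is that the clustering runs on the profiles, not on the raw ratings. Respondents with very different raw scores land in the same segment when the same driver accounts for most of their predicted outcome.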

This distinction matters enormously in practice. Look at a financial services example. A European credit management company worked with us on a dataset covering 20,000 consumers across 20 countries. Conventional demographic analysis suggested the obvious: segment by income. But when we modelled actual financial behaviour and generated individual driver profiles, we discovered that 21 per cent of average earners showed patterns of severe financial vulnerability. Their income was stable, but their psychological relationship with money, shaped by childhood experiences and social pressure, was driving behaviour that no income bracket could predict. That insight required a completely different engagement strategy. It was the difference between a marketing message and a commercial intervention.

The Five-Step Workflow

With the Data Alchemy Platform, running outcomes-based segmentation is straightforward. Here's how it actually works in practice.

Step 1: Upload Your Data. Start with your survey dataset as a CSV or XLSX file. The platform accepts any standard survey export. You don't need to clean it or restructure it. Upload and move on.

Step 2: Run Description. Before segmentation, the platform runs a data profiling check. It identifies variable types, flags data quality issues, checks for missing values, and gives you a summary of what you're working with. This step protects you from garbage-in-garbage-out. If there are serious quality problems, you'll know before you invest time in segmentation.
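For a sense of what a profiling pass like this involves, here is a rough sketch using only Python's standard library. It is an illustration, not the platform's implementation: the list of missing-value markers, the column names, and the function names are all assumptions.

```python
import csv
import io

MISSING = {"", "na", "n/a", "null", "none"}  # assumed missing-value markers


def _is_number(text):
    try:
        float(text)
        return True
    except ValueError:
        return False


def profile_columns(rows):
    """Summarise each column: inferred type, missing count, distinct values."""
    report = {}
    for name in rows[0].keys():
        values = [(row.get(name) or "").strip() for row in rows]
        present = [v for v in values if v.lower() not in MISSING]
        is_numeric = bool(present) and all(_is_number(v) for v in present)
        report[name] = {
            "type": "numeric" if is_numeric else "text",
            "missing": len(values) - len(present),
            "distinct": len(set(present)),
        }
    return report


# Tiny illustrative export; a real file would be opened with open(path, newline="").
sample = io.StringIO(
    "respondent_id,nps,region\n"
    "1,9,North\n"
    "2,,South\n"
    "3,7,n/a\n"
)
rows = list(csv.DictReader(sample))
profile = profile_columns(rows)
for column, summary in profile.items():
    print(column, summary)
```

Even a check this simple surfaces the two problems that most often derail a segmentation: columns that are not the type you assumed, and variables with too many gaps to model.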

Step 3: Run Segmentation. This is where the proprietary methodology described above comes to life. The platform first models your chosen behavioural outcome, isolating which variables actually predict behaviour rather than treating every survey question equally. It then generates individual driver profiles for each respondent, capturing the unique pattern of influences that shape their decisions. Finally, it clusters respondents by the shape of those influence patterns, not by raw survey responses. The result is segments defined by shared motivations, not shared demographics. All of this runs in minutes. You don't make methodology decisions or write code. The platform handles the analytical complexity while you focus on interpretation.

Step 4: Run Catalyst to Understand What Drives Each Segment. Segmentation tells you who your audiences are and what motivational patterns they share. Catalyst goes deeper, quantifying the gap between stated importance and real behavioural impact for each segment. A respondent might tell you that price is important. But their behavioural fingerprint might reveal they are actually driven by social validation or habit. Catalyst separates these layers using the same modelling approach that powers the segmentation itself: analysing what actually predicts behaviour, not what people claim on a rating scale. This is where strategy gets specific. You move from "these are your segments" to "here is exactly what will shift each one."
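The stated-versus-derived gap can be illustrated with a deliberately simple sketch. It assumes that derived importance is approximated by the absolute correlation between each driver's rating and the outcome, which is a crude stand-in for the modelling Catalyst uses. The numbers, driver names, and function names are invented for the example.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation; returns 0.0 when either series has no variance."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / sqrt(var_x * var_y)


def to_shares(values):
    """Rescale non-negative numbers so they sum to 1."""
    total = sum(values.values())
    if total == 0:
        return {name: 1 / len(values) for name in values}
    return {name: value / total for name, value in values.items()}


def importance_gap(stated, experience, outcome):
    """Derived share minus stated share per driver; positive means understated."""
    stated_share = to_shares({d: sum(r) / len(r) for d, r in stated.items()})
    derived_share = to_shares({d: abs(pearson(s, outcome)) for d, s in experience.items()})
    return {d: derived_share[d] - stated_share[d] for d in stated}


# One hypothetical segment of four respondents.
stated = {"price": [9, 8, 9, 10], "habit": [3, 2, 4, 3]}      # "how important is X?"
experience = {"price": [5, 6, 5, 6], "habit": [2, 4, 6, 8]}   # ratings of X
outcome = [3, 5, 7, 9]                                        # e.g. likelihood to recommend

gaps = importance_gap(stated, experience, outcome)
for driver, gap in gaps.items():
    print(f"{driver}: {gap:+.2f}")
```

In this toy segment, respondents say price matters most, but habit tracks the outcome far more closely. The negative gap on price and the positive gap on habit are the kind of mismatch this step is meant to expose.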

Step 5: Export and Act. The platform delivers three things: segment assignments appended to your original dataset (so you can cross-tab and dig deeper), a client-ready presentation deck that explains the segments and their drivers, and a methodology note for your files. Everything you need to brief stakeholders and move into implementation.

For a typical survey of 500 to 5,000 respondents, this entire workflow takes under an hour. The longest part is usually the Catalyst analysis. The segmentation itself runs in minutes.

What Good Segments Actually Look Like

Not all segments are created equal. You can run a clustering algorithm and get groups that are statistically sound but strategically useless. Here's what you're actually looking for.

Behaviourally Distinct. The segments should differ in ways that matter to how you engage with them. If your segments differ only on demographics, they're not behaviourally distinct. They're just fancy demographics. Outcomes-based segmentation ensures each group shows a genuinely different pattern of behaviour or motivation.

Internally Consistent. Respondents within a segment should have more in common with each other than with people in other segments. This sounds obvious, but it's where a lot of segmentation falls apart. You get three big groups and two tiny outlier groups that throw off your insight and make implementation a nightmare.
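If you want to check internal consistency yourself on exported assignments, two simple diagnostics go a long way: a silhouette score and a scan for tiny segments. The sketch below uses only the standard library. The 5 per cent cut-off, the toy coordinates, and the function names are assumptions, not platform defaults.

```python
from collections import Counter
from math import dist


def silhouette(points, labels):
    """Mean silhouette score: near 1 means tight, well-separated segments."""
    clusters = {}
    for point, label in zip(points, labels):
        clusters.setdefault(label, []).append(point)
    scores = []
    for point, label in zip(points, labels):
        own = clusters[label]
        if len(own) == 1 or len(clusters) == 1:
            scores.append(0.0)  # convention for singleton or single segments
            continue
        # a: mean distance to the rest of the respondent's own segment
        a = sum(dist(point, other) for other in own) / (len(own) - 1)
        # b: mean distance to the closest other segment
        b = min(
            sum(dist(point, other) for other in members) / len(members)
            for other_label, members in clusters.items()
            if other_label != label
        )
        scores.append((b - a) / max(a, b) if max(a, b) > 0 else 0.0)
    return sum(scores) / len(scores)


def small_segments(labels, min_share=0.05):
    """Segments whose share of respondents falls below min_share (assumed cut-off)."""
    counts = Counter(labels)
    return sorted(s for s, count in counts.items() if count / len(labels) < min_share)


# Toy driver profiles in two dimensions, with their segment labels.
points = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10)]
labels = [0, 0, 0, 1, 1, 1]
score = silhouette(points, labels)
print(round(score, 2))

# A solution with one outlier group that holds only 2% of respondents.
tiny = small_segments([0] * 48 + [1] * 50 + [2] * 2)
print(tiny)
```

A low silhouette score or a flagged segment is not proof that the solution is wrong, but both are prompts to look again before you build personas on top of it.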

Commercially Actionable. You need to be able to act on the insight. If a segment exists only in statistical space but you can't identify what makes them different in the real world, you can't do anything with it. Your personas should have clear behavioural or attitudinal signatures that your marketing, product, or service teams can use.

Stable Over Time. A segmentation that dissolves in six months is a segmentation that doesn't work. Good segments reflect underlying human motivations that persist. The financial vulnerability pattern we found in that lender's data didn't go away in quarter two. It was structural, not transient.
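One simple way to monitor stability, assuming you segment each new wave of data with the same solution, is to compare segment shares between waves. The sketch below measures the drift as the total variation distance between the two share distributions. The segment names and wave sizes are hypothetical, and what counts as too much drift is a judgement call for your category.

```python
from collections import Counter


def segment_shares(labels):
    """Share of respondents in each segment."""
    counts = Counter(labels)
    return {segment: count / len(labels) for segment, count in counts.items()}


def share_drift(wave_a, wave_b):
    """Total variation distance between two waves' segment shares (0 = identical)."""
    a, b = segment_shares(wave_a), segment_shares(wave_b)
    return 0.5 * sum(abs(a.get(s, 0.0) - b.get(s, 0.0)) for s in set(a) | set(b))


# Hypothetical segment assignments from two survey waves of 100 respondents each.
wave_1 = ["status"] * 40 + ["habit"] * 35 + ["price"] * 25
wave_2 = ["status"] * 38 + ["habit"] * 36 + ["price"] * 26

drift = share_drift(wave_1, wave_2)
print(f"{drift:.2%} of respondents would need to move for the shares to match")
```

Stable shares are a necessary sign rather than a sufficient one: the segments also need to keep the same driver profiles from wave to wave.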

The financial services case is a good example of all four. The four personas were behaviourally distinct (different spending patterns, different risk profiles), internally consistent (the financially vulnerable group was tightly clustered, not scattered), commercially actionable (the lender could design a specific product and engagement approach for each), and stable (the patterns held across multiple waves of data).

When Self-Service Segmentation Isn't Enough

The Data Alchemy Platform handles the majority of segmentation projects. But there are scenarios where you need deeper, more bespoke work.

If you need to integrate multiple data sources (survey data plus transaction data plus digital behaviour), you're beyond self-service. The platform handles single datasets well. If your segmentation needs to feed into a complex predictive model (credit scoring, churn prediction), you might want a data scientist in the room to make sure the integration is clean. And if the commercial stakes are high enough that getting the segmentation wrong would be expensive, that's a moment for human expertise and validation.

But for the standard case, "I collected this survey data and I need to understand who my audience is and what moves them," the platform is built for you to do the work yourself.

When you need deeper work, Knowsis consulting is available. We integrate the platform with human analysis, bringing decades of experience to interpretation and strategy. But most insights leaders and marketing strategists will find that self-service segmentation gives them exactly what they need, exactly when they need it.