For twenty years, advanced analytics in market research has followed the same pattern. You collect survey data, load it into a system, then hand it to a specialist who runs the analysis. Days pass. Weeks pass. Then the results arrive, presented in a PowerPoint deck you can barely interrogate. The value of that analysis is real, but the bottleneck is structural. And every insights team I've worked with has accepted it as inevitable.

It's not inevitable anymore. The same transformation that happened in design, accounting, and web development is now happening in research analytics. Complex work is becoming accessible. Not simplified or dumbed down. Made accessible. The sophisticated analytical techniques are still there. The interface changed.

The Outsourcing Tax

Most insights teams don't have an internal data scientist. Not because they don't want one. Because they can't afford one. So when you need segmentation, driver analysis, or outcome-based clustering, you hand the data to an agency, a specialist consultant, or your vendor's analytics team. This arrangement costs you three things: money, time, and ownership.

The money cost is obvious. External analytics is expensive. You're paying someone else's salary plus margin plus overhead. If you run segmentation four times a year, that's a real line item on the research budget.

The time cost is less visible but more painful. You submit the data. You wait for them to create their analysis plan. You wait for them to run the analysis. You wait for them to build the output. Two weeks later you have your results. But the competitive moment has passed. The board meeting is next week. By the time you have an answer, the question is stale.

The ownership cost is the most underrated. The methodology for the segmentation lives in someone else's head. They know why certain variables cluster that way. They know what would change if you adjusted a parameter. You don't. If that person leaves the agency, you've lost the logic. If you want to validate the clustering with real-world behavioural outcomes, you can't. You don't own the process.

Most insights teams accept these costs because the alternative, building an internal data science capability, seems more expensive still. Good data scientists cost 150 to 200 thousand dollars a year. You'd need at least two of them to have coverage. Add benefits, equipment, and a training budget, and you're looking at half a million dollars a year for a capability that gets used maybe thirty percent of the time. The math doesn't work for most teams.
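To make that arithmetic concrete, here is a rough sketch. The salary range, the headcount of two, and the thirty percent utilisation come from the paragraph above. The 25% loading for benefits, equipment, and training is an assumed figure used only for illustration, so substitute your own.

```python
# Figures taken from the text: salary range, headcount, utilisation.
SALARY_RANGE = (150_000, 200_000)
HEADCOUNT = 2
UTILISATION = 0.30

# ASSUMPTION: benefits, equipment and training add 25% on top of salary.
OVERHEAD_RATE = 0.25


def annual_cost(salary, headcount=HEADCOUNT, overhead_rate=OVERHEAD_RATE):
    """Fully loaded yearly cost of the in-house team."""
    return salary * headcount * (1 + overhead_rate)


def cost_per_utilised_year(total_cost, utilisation=UTILISATION):
    """What a full year of actual analytical work effectively costs."""
    return total_cost / utilisation


low, high = (annual_cost(salary) for salary in SALARY_RANGE)
print(f"Loaded team cost: ${low:,.0f} to ${high:,.0f} a year")
print(f"Effective cost at 30% utilisation: ${cost_per_utilised_year(high):,.0f}")
```

Under these assumptions the top of the range lands on the half-million figure, and the idle seventy percent is what makes the effective cost so hard to justify.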

What Actually Changed

Canva didn't invent graphic design. Xero didn't invent accounting. Squarespace didn't invent web development. What they did was take complex work and build software that made it accessible to people who weren't trained in that field.

The analytical techniques themselves didn't change. Segmentation still works the way it worked in 1995. Driver analysis still separates importance from impact the same way. What changed is the interface. Instead of needing someone who understands how to build a clustering algorithm from scratch, the software handles the execution. Your job shifts from running the analysis to understanding the results.

This has happened in research before. Qualtrics didn't invent survey analytics. They took a field that required statistical training and built an interface that let market researchers do their own cross-tabs and banner tables. If that's possible for basic analysis, why not for advanced analytics?

The honest answer is that it's harder. Segmentation is genuinely complex. But hard doesn't mean impossible. It means you need software built with researchers in mind, not data scientists.

What Self-Service Analytics Actually Looks Like

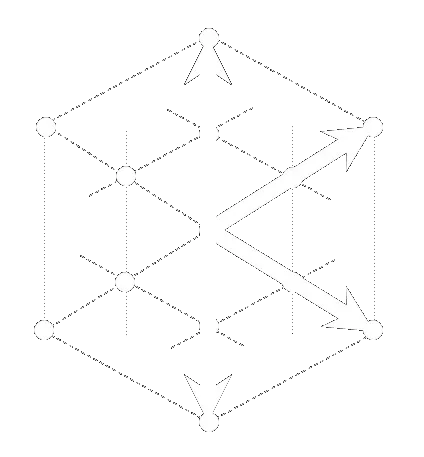

The Data Alchemy Platform is built around a specific workflow. Upload your data. Describe it. Cluster it. Analyse it. Validate it. Scale it. Each step is handled by a purpose-built application designed to work the way researchers think, not the way mathematicians think.

You upload your survey data. The Description application profiles it automatically, flagging data quality issues and variable relationships. No manual data cleaning. No documentation wrestling. The system understands your data.

From there, you move to segmentation. The Segmentation application clusters your data against real-world behavioural outcomes. You define what you're trying to predict. The system groups respondents by shared patterns that drive those outcomes. This isn't demographic bucketing. This is outcome-driven segmentation. The segments predict behaviour because they're built from behaviour.
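For readers who want a feel for the underlying idea, here is a minimal sketch. It is not the Segmentation application's method, which this article does not describe. It is a generic stand-in: a plain k-means grouping on two behavioural variables, followed by a check of how each segment performs on the outcome you care about. The respondents, variable names, and renewal outcome are all hypothetical.

```python
from statistics import mean

# Hypothetical respondents: (purchases per month, app visits per week, renewed?)
respondents = [
    (1, 2, 0), (8, 9, 1), (2, 1, 0), (9, 8, 1),
    (1, 1, 0), (9, 9, 1), (2, 2, 1), (8, 8, 0),
]


def squared_distance(p, q):
    return sum((a - b) ** 2 for a, b in zip(p, q))


def kmeans(points, k, max_iterations=20):
    """Plain k-means. The first k points seed the centroids so runs are repeatable."""
    centroids = list(points[:k])
    labels = []
    for _ in range(max_iterations):
        # Assign every point to its nearest centroid.
        labels = [
            min(range(k), key=lambda c: squared_distance(p, centroids[c]))
            for p in points
        ]
        # Move each centroid to the mean of its members.
        updated = []
        for c in range(k):
            members = [p for p, label in zip(points, labels) if label == c]
            if members:
                updated.append(tuple(mean(dim) for dim in zip(*members)))
            else:
                updated.append(centroids[c])
        if updated == centroids:
            break
        centroids = updated
    return labels


behaviour = [(purchases, visits) for purchases, visits, _ in respondents]
outcomes = [renewed for _, _, renewed in respondents]
labels = kmeans(behaviour, k=2)

# Profile each behavioural segment against the outcome.
renewal_rate = {
    segment: mean([o for o, label in zip(outcomes, labels) if label == segment])
    for segment in sorted(set(labels))
}
print(renewal_rate)
```

On this toy data the light users renew at 25% and the heavy users at 75%. That gap in outcome between segments is the property an outcome-driven segmentation is meant to deliver.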

Catalyst separates importance from impact. Which variables actually move the needle? Which ones just correlate? This is where most research analytics stops. You identify drivers and call it a day. But correlation isn't causation, and importance isn't impact. Catalyst keeps the two apart. You see both.
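A small illustration of why the two can diverge. How Catalyst computes either measure is not described here, so the definitions below are assumptions chosen for the sketch: "importance" is the Pearson correlation between a driver and the outcome, and "impact" is the regression slope, meaning how far the outcome moves per one-point change in the driver. The driver names and scores are hypothetical, and neither number establishes causation.

```python
from math import sqrt


def deviations(values):
    centre = sum(values) / len(values)
    return [v - centre for v in values]


def importance(driver, outcome):
    """ASSUMED definition: Pearson correlation with the outcome."""
    dx, dy = deviations(driver), deviations(outcome)
    covariation = sum(a * b for a, b in zip(dx, dy))
    return covariation / sqrt(sum(a * a for a in dx) * sum(b * b for b in dy))


def impact(driver, outcome):
    """ASSUMED definition: least-squares slope of outcome on driver."""
    dx, dy = deviations(driver), deviations(outcome)
    return sum(a * b for a, b in zip(dx, dy)) / sum(a * a for a in dx)


# Hypothetical scores for six respondents.
outcome = [1, 2, 3, 4, 5, 6]           # e.g. likelihood to renew
ease_of_use = [1, 3, 5, 6, 8, 10]      # spread widely across the scale
support_quality = [4, 5, 4, 5, 6, 6]   # bunched in the middle of the scale

for name, driver in [("ease_of_use", ease_of_use),
                     ("support_quality", support_quality)]:
    print(f"{name}: importance={importance(driver, outcome):.2f}, "
          f"impact={impact(driver, outcome):.2f}")
```

In this toy data, ease of use tracks the outcome more tightly, but a one-point gain in support quality is associated with a larger move in the outcome. Ranking drivers on one measure alone would hide that.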

Synthetic Projection scales your findings. You calibrated your segmentation against real people. Now you want to understand how those segments would scale across your addressable market. Projection takes your calibration data and extrapolates it cleanly across a larger population scaffold, preserving the relationships you identified.
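The general idea of scaling a calibrated sample to a population can be sketched with simple cell weighting. This is not a description of how Synthetic Projection works internally. It is a basic post-stratification-style stand-in, and the cells, segment labels, and population counts are hypothetical.

```python
from collections import Counter, defaultdict

# Hypothetical calibration sample: (population cell, assigned segment).
sample = [
    ("urban", "A"), ("urban", "A"), ("urban", "A"), ("urban", "B"),
    ("rural", "A"), ("rural", "B"), ("rural", "B"), ("rural", "B"),
]

# Hypothetical population scaffold: people per cell in the addressable market.
population = {"urban": 600_000, "rural": 400_000}


def project(sample, population):
    """Apply each cell's observed segment shares to that cell's population size."""
    by_cell = defaultdict(Counter)
    for cell, segment in sample:
        by_cell[cell][segment] += 1

    projected = Counter()
    for cell, size in population.items():
        counts = by_cell[cell]
        total = sum(counts.values())
        for segment, n in counts.items():
            projected[segment] += size * n / total
    return dict(projected)


projected = project(sample, population)
print(projected)
```

The sample is split evenly between the two segments, yet the projection puts segment A at 550,000 and segment B at 450,000, because urban respondents stand in for a larger share of the market. Keeping the within-cell relationships intact while reweighting is the point of projecting against a scaffold rather than multiplying raw sample shares.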

PRISM validates everything. Before any of these results reach your dashboard, they're audited across five dimensions of quality. Precision, Richness, Integrity, Strength, and Modelability. You see exactly where the analysis is rock solid and where it's weaker. No black box. Full transparency.

All of this happens in a browser. No code. No command line. No statistical background required. The time from data upload to validated results is measured in minutes, not weeks.

What Self-Service Analytics Doesn't Replace

This is where I need to be honest about the limits. Self-service analytics doesn't replace strategic thinking. It doesn't replace research design. It doesn't replace the twenty years of experience that tells you which analysis to run in the first place.

What it does replace is the execution bottleneck. Your team doesn't stop thinking strategically because they're waiting for someone else to run the numbers. They don't defer the decision until next quarter because the analytics turnaround is too slow. They run the analysis themselves. They interrogate the results immediately. They iterate. They validate with real-world behavioural outcomes. They move faster.

The question isn't whether your team can run a segmentation. Your team can learn that. The question is why they're still sending data to someone else to run it for them when the tools exist to do it in-house.

The Real Question

For twenty years, the answer to that question was "because the tools are too complicated." Building segmentation is complex. Building driver analysis is complex. Building synthetic projection is complex. These problems required specialists.

That answer has an expiry date. The technology exists to make these problems accessible. The software exists. The question isn't "can we do this in-house?" It's "why aren't we?"

If the answer is "because the tools are too complex," you're looking at a problem that's solvable. Not with hiring. With better software.