Seventy-one percent of market researchers expect synthetic data to constitute the majority of consumer data collection within three years. That statistic, from a recent Qualtrics study, tells you everything about where the industry is heading. It tells you rather less about whether that direction is safe.

The excitement is understandable. Synthetic data is fast, scalable, and cheap. You can generate thousands of virtual respondents overnight. You can simulate market reactions before a single real person has seen your product. The possibility of compressing weeks of fieldwork into hours is genuinely compelling, and at Knowsis we have embraced synthetic data as an accelerant of exactly that kind.

But there is a question that the industry's enthusiasm has been remarkably reluctant to answer: how do you know if synthetic data is any good?

Not good in the sense of statistically interesting. Good in the sense of actually reliable. Good in the sense that the patterns it shows you reflect something real, something that would hold up when a real consumer faces a real decision.

That question is what PRISM was built to answer.

The Trust Paradox

In 2025, I published a piece asking why the research industry embraces weighting without question but treats synthetic data with scepticism. It is a fair question. Weighting is, after all, a form of data manipulation. We take real responses and mathematically adjust them to better represent the population. We accept that adjustment because it is governed by a published, auditable methodology. We know what weighting does, how it does it, and what conditions it requires to be valid.

Synthetic data has no equivalent standard. Vendors publish accuracy claims, sometimes dramatic ones like "12x accuracy", without explaining what that accuracy is measured against, what methodology produced it, or what conditions would cause it to fail. You are asked to trust the output without being shown the working.

This is not a small problem. It is the central credibility challenge for synthetic data adoption.

When I started building what would become the Synthetic Data Engine in the Data Alchemy Platform, I knew that publishing a framework was not optional. It was the price of credibility. The first step in earning the right to make claims about synthetic data quality was to define, openly and precisely, what quality means.

What Synthetic Projection Is Not

Before explaining PRISM, it is worth being clear about what synthetic projection is designed to do, and what it is not.

Synthetic projection is not a replacement for human-generated survey data. It does not remove sample bias. It does not eliminate error or variance. And it does not magically transform a weak sample into ground truth.

At Knowsis, we see synthetic data as a way of amplifying human insight, not replacing it. The calibration survey, the real data you collected from real people, is the foundation. Synthetic projection learns the behavioural relationships in that data and projects them onto a larger, representative population scaffold. The goal is not to invent behaviour. It is to avoid losing the behaviour you already measured when you scale it up.

Think of it the way my son explained de-extinction to me on a drive to jiu-jitsu one morning. Scientists working to bring back the dodo start with partial DNA sequences, incomplete but rich with distinctive traits. They use a living relative's genome as a scaffold to fill in the gaps, and generative tools to bridge the two. What you end up with is not a static replica but something that carries the same behavioural patterns as the original. Synthetic data works the same way: the calibration survey is the partial DNA, the population dataset is the scaffold, and the generative model is the bridge. When it works, the result behaves like real human data.

When it works. That qualifier is doing a lot of work. And PRISM is what tells you whether it worked.

The Five Dimensions of PRISM

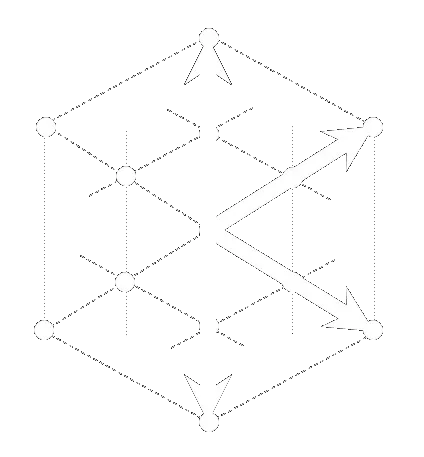

PRISM stands for Precision, Richness, Integrity, Strength, and Modelability. Each dimension addresses a distinct aspect of synthetic data quality. Each is independently scored from 0 to 100, and the composite PRISM Quality Score is a weighted average across all five.
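To make the idea of a weighted composite concrete, here is a minimal Python sketch. The weights and the example scores are hypothetical placeholders chosen for illustration; they are not the published PRISM weights, and the function name is an assumption of this sketch.

```python
# Hypothetical dimension weights: illustrative only, NOT the published PRISM weights.
WEIGHTS = {
    "precision": 0.25,
    "richness": 0.15,
    "integrity": 0.20,
    "strength": 0.15,
    "modelability": 0.25,
}


def composite_score(scores, weights=WEIGHTS):
    """Return the weighted average of 0-100 dimension scores."""
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same dimensions")
    for name, value in scores.items():
        if not 0 <= value <= 100:
            raise ValueError(f"{name} score {value} is outside the 0-100 range")
    total_weight = sum(weights.values())
    # Dividing by the total weight keeps the result on the 0-100 scale
    # even if the weights do not sum exactly to 1.
    return sum(scores[d] * weights[d] for d in weights) / total_weight


# Made-up dimension scores for a single run.
example = {
    "precision": 90,
    "richness": 70,
    "integrity": 80,
    "strength": 60,
    "modelability": 85,
}
print(round(composite_score(example), 2))
```

With these made-up numbers the composite lands just under 80, even though two dimensions score 85 or above. That is the practical reason to read the five dimension scores alongside the composite rather than instead of it.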

Precision measures how closely the synthetic variable distributions match the original source data. This is the most intuitive check. If your calibration survey shows 34% of respondents in a particular attitudinal segment, does the synthetic projection preserve that proportion? Precision goes beyond simple distribution matching to check subgroup alignment, correlation preservation, and statistical divergence across variables.
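One simple way to quantify a distribution check of this kind is total variation distance between the calibration and synthetic proportions. The sketch below is an illustration under that assumption; the text above does not say which divergence measure PRISM uses, and the segment names and all proportions other than the 34% figure are invented.

```python
def total_variation_distance(p, q):
    """Half the sum of absolute differences between two categorical distributions.

    0.0 means the proportions are identical; 1.0 means they do not overlap at all.
    """
    categories = set(p) | set(q)
    return 0.5 * sum(abs(p.get(c, 0.0) - q.get(c, 0.0)) for c in categories)


# Share of respondents per attitudinal segment (hypothetical values).
calibration = {"segment_a": 0.34, "segment_b": 0.41, "segment_c": 0.25}
synthetic = {"segment_a": 0.31, "segment_b": 0.43, "segment_c": 0.26}

tvd = total_variation_distance(calibration, synthetic)
print(round(tvd, 3))
```

Here the synthetic projection has shifted three percentage points of respondents out of the first segment, which shows up as a distance of roughly 0.03. How a distance like this would be converted into a 0 to 100 score is left open in this sketch.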

Richness measures whether the synthetic data preserves the diversity and range of the original. A high Richness score means the projection captures the full spread of responses, not a compressed or smoothed version. This matters because compression is one of the most common failure modes in synthetic projection. The model learns central tendencies well but loses the edges, and the edges are often where the most strategically interesting consumers live.
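Compression of this kind can be spotted with a very simple check: compare the spread of a variable in the synthetic output with its spread in the original. The following Python sketch uses a ratio of standard deviations as a stand-in; this is an assumed diagnostic for illustration, not the published Richness metric, and the data are invented.

```python
from statistics import pstdev


def spread_ratio(original, synthetic):
    """Synthetic standard deviation divided by original standard deviation.

    A ratio well below 1 suggests the synthetic data has been compressed
    towards the centre; a ratio near 1 suggests the spread is preserved.
    """
    original_sd = pstdev(original)
    if original_sd == 0:
        raise ValueError("original data has no spread to compare against")
    return pstdev(synthetic) / original_sd


# Hypothetical 7-point scale responses with the same mean in both datasets.
original = [1, 2, 3, 4, 5, 6, 7]
compressed = [3, 3, 4, 4, 4, 5, 5]

ratio = spread_ratio(original, compressed)
print(round(ratio, 2))
```

Both lists have the same mean, so a check on central tendency alone would pass. The ratio, which comes out well below 1, shows that the extreme responses have disappeared.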

Integrity checks whether the relationships between variables are maintained. Synthetic data with high Integrity is internally coherent. The logical patterns that exist in the real data are preserved in the projection. A respondent who scores high on brand affinity in the calibration survey should still be associated with the attitudinal patterns that explain that affinity in the synthetic output. If those relationships break down, the synthetic data may look statistically plausible while being analytically useless.
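A deliberately extreme example shows how relationships can break while each variable still looks fine on its own. The Python sketch below compares the Pearson correlation between two variables in real and synthetic data. The variable names, the data, and the choice of Pearson correlation are all assumptions made for illustration, not a description of how PRISM computes Integrity.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length numeric lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


def correlation_gap(real_x, real_y, synth_x, synth_y):
    """Absolute difference between the real and synthetic correlations."""
    return abs(pearson(real_x, real_y) - pearson(synth_x, synth_y))


# Hypothetical brand affinity and attitude scores.
real_affinity = [1, 2, 3, 4, 5]
real_attitude = [2, 4, 6, 8, 10]
synth_affinity = [1, 2, 3, 4, 5]
synth_attitude = [10, 8, 6, 4, 2]  # same values as the real data, reversed pairing

gap = correlation_gap(real_affinity, real_attitude, synth_affinity, synth_attitude)
print(gap)
```

Each synthetic variable contains exactly the same values as its real counterpart, so any one-variable distribution check would pass. The relationship between them has flipped from perfectly positive to perfectly negative, which is the kind of failure a relationship check exists to catch.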

Strength measures the statistical confidence and analytical utility of the synthetic data. It is possible to produce synthetic data that is distributionally accurate but analytically weak, data that passes surface checks but produces unreliable results when used for modelling or segmentation. Strength catches this by testing record utility, effective sample size, and predictive equivalence.
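Effective sample size has a widely used approximation in survey research, Kish's formula, which divides the squared sum of the weights by the sum of the squared weights. The Python sketch below applies it to a set of record weights. Whether PRISM uses this formula, and whether synthetic records carry weights at all, are assumptions of this sketch.

```python
def kish_effective_sample_size(weights):
    """Kish's approximation: (sum of weights)^2 / (sum of squared weights)."""
    total = sum(weights)
    sum_of_squares = sum(w * w for w in weights)
    if sum_of_squares == 0:
        raise ValueError("at least one weight must be non-zero")
    return total * total / sum_of_squares


# Four records in each case (hypothetical weights).
equal_weights = [1, 1, 1, 1]
uneven_weights = [3, 1, 1, 1]

print(kish_effective_sample_size(equal_weights))   # equal weights: all four records count fully
print(kish_effective_sample_size(uneven_weights))  # one dominant record reduces the effective size
```

Both datasets contain four records, but the second behaves statistically like three, because one record dominates. The same logic explains how a large synthetic dataset can still be analytically weak.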

Modelability is the most practically important dimension. It tests whether the synthetic data would produce similar analytical results to the original data if used for modelling, driver analysis, or segmentation. This is the question that matters most to the analyst who will actually use the data: not whether it looks right, but whether it behaves right.

Each PRISM dimension has weighted sub-metrics. The full methodology is published on the Data Alchemy Platform and available for independent review.

What the Scores Mean

PRISM scores above 80 indicate excellent quality. The synthetic data is suitable for full analytical use with high confidence. Scores between 60 and 80 indicate good quality, suitable for most analytical applications. Scores below 60 require parameter adjustment, additional source data, or a reassessment of whether synthetic projection is appropriate for this dataset.
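The bands can be expressed as a small lookup, sketched here in Python. The treatment of the boundary values is an assumption: the text says "above 80" and "below 60", so this sketch places scores of exactly 80 and exactly 60 in the middle band. The function name and band labels are also illustrative.

```python
def prism_band(score):
    """Map a 0-100 score to the quality bands described above.

    Assumption: exactly 80 and exactly 60 both fall in the "good" band.
    """
    if not 0 <= score <= 100:
        raise ValueError(f"score {score} is outside the 0-100 range")
    if score > 80:
        return "excellent"
    if score >= 60:
        return "good"
    return "needs review"


for value in (92, 80, 71.5, 60, 48):
    print(value, prism_band(value))
```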

Crucially, PRISM scores are generated automatically for every Projection and Imputation run. Quality assurance is not a separate step. It is built into the workflow. Every synthetic dataset that comes out of the Data Alchemy Platform arrives with a full PRISM Quality Report, covering the composite score, all five dimension scores, and the sub-metric breakdown.

This is what "validated synthetic data" means in practice. Not a headline accuracy figure. Not a vendor's assurance. A five-dimension audit trail that tells you exactly where the synthetic data is strong, where it is weaker, and whether you can trust the analysis that follows.

Why This Matters for the Industry

The synthetic data market is consolidating fast. Vendors are emerging with bold claims and minimal methodology. The pressure to adopt is real. Seventy-one percent of researchers expect it to dominate within three years, and no one wants to be the last firm still running six-week fieldwork projects when the rest of the industry has moved on.

But the absence of published validation standards is a genuine risk. Not just to individual research projects, but to the credibility of synthetic data as an approach. Every high-profile failure, every synthetic dataset that leads a client to a wrong decision, makes the whole category harder to sell. The industry needs a standard that separates governed synthetic data from black-box projection.

PRISM is Knowsis's contribution to that standard. We publish the methodology not because we are required to, but because we believe that transparency is the only durable basis for trust. Clients who use the Data Alchemy Platform are not asked to trust our accuracy claims. They are given the tools to evaluate those claims themselves.

That is the principle behind PRISM: claimed accuracy without published methodology is not validation. Validation means showing your working.

A Note on Imputation

PRISM governs the two modes of the Synthetic Data Engine, Projection and Imputation, both of which are available now as self-service applications on the Data Alchemy Platform.

Projection scales a small, high-quality calibration survey to a larger population-level dataset. Imputation fills missing values in an existing dataset, including missing survey responses, unfilled quotas, and gaps in panel data. Both modes carry the same quality risks, and both are governed by the same PRISM framework. Every Imputation run produces a full PRISM Quality Report, with the same five dimensions scored against the specific characteristics of an imputed dataset.