There is a moment in almost every research project that I have come to think of as the translation problem.

You have the data. You have run the analysis. You understand what it means. And then you spend the next two weeks turning that understanding into something a client can act on, wrestling with PowerPoint, chasing sign-offs, formatting charts, writing the same executive summary you have written a hundred times before.

The insight existed the moment the analysis finished. Everything after that was administration.

I have been in market research for over twenty years. I have watched the analytical side of the discipline transform almost beyond recognition. But the last mile, from finished analysis to boardroom-ready output, has barely changed. It is still largely a manual, time-consuming, human-bottlenecked process.

The Alchemist Agent was built to close that gap.

Two Evolutions, One Unsolved Problem

The market research industry has been through two parallel transformations over the past twenty years, and they are worth understanding separately.

The first was in data collection. When I started my career, surveys were conducted face to face. Fieldwork took weeks. Data was captured manually, processed in batches, and delivered as printed cross-tabulation tables. I remember receiving those printouts and hand-copying the numbers I needed into PowerPoint, cell by cell. Then came telephone interviewing, which compressed timelines. Then online panels, which compressed them further. Then always-on digital measurement, which made certain types of data collection effectively instantaneous. The industry got dramatically faster at gathering data.

The second transformation was in output delivery. Printed tables gave way to Excel files, which meant you could copy and paste rather than transcribe by hand. Excel gave way to dashboards, which meant the numbers were prepopulated directly from the data source. No manual copying at all. Dashboards got more sophisticated. They got more visual. They got more accessible to non-technical stakeholders.

But here is what neither transformation solved: interpretation.

A dashboard gives you numbers. It does not tell you what those numbers mean. It does not tell you which of the fifteen metrics you are looking at actually matters for the decision you need to make. It does not tell you that the satisfaction score declining in the 35 to 44 age group is driven by a single experience driver that your operations team could fix in a quarter. It shows you the signal. It leaves the meaning entirely to you.

This is why, despite twenty years of genuine progress in how fast we collect data and how cleanly we present it, the interpretation bottleneck has remained stubbornly in place. A skilled analyst still has to sit with the numbers, build the story, and translate it into something a decision-maker can act on. That work still takes days. It still gets compressed and simplified at every handoff. And insight still regularly dies in the gap between what the data shows and what eventually reaches the boardroom.

My own experience of this ran parallel to the industry's. I moved from Excel and pivot tables through R and Python to machine learning, gaining more analytical power with each step but always running up against the same constraint: the analysis could be done faster and more rigorously, but the work of turning it into strategic communication remained largely manual.

The Alchemist Agent was built to solve that specific problem. Not to make data collection faster, not to make dashboards prettier, but to close the interpretation gap that two decades of industry evolution left wide open.

The Specialist Barrier

There is a third constraint that the industry has never adequately solved, and it sits between the data and the dashboard.

Most research projects live and die on cross-tabulations and frequencies. These are the workhorses of the industry, reliable, accessible, and understood by everyone from the junior executive to the client. Nothing wrong with them. They remain the foundation of most research deliverables and will continue to be.

But experienced researchers know that the real analytical depth, the insight that turns a competent deck into an outstanding one, comes from the advanced methods: driver analysis that distinguishes what matters from what merely correlates, dimensionality reduction that finds the underlying structure in a twenty-variable battery, outcome-based segmentation that reveals behavioural clusters invisible to demographic cuts. These approaches do not replace cross-tabs. They sit alongside them, adding a layer of strategic depth that cross-tabs cannot provide.

The problem has always been access.

Running a proper driver analysis required briefing a data scientist. That briefing took time. The data scientist had the technical skill but not the project context, not the client history, not the understanding of why certain variables mattered more than others in this particular study for this particular client. You would get results back in an Excel file, sometimes days later, and then spend further time translating them into something presentable. The data scientist would not be producing your slides. You would be doing that yourself, working from outputs you sometimes only partially understood, without the narrative thread that connects the analysis to the client's actual question.

This is the specialist barrier. Advanced analysis has been technically available for decades. But the friction of accessing it, briefing it, waiting for it, and then translating it meant that most research projects never went there. The cross-tab deck went out. The advanced analysis stayed in the backlog.

The Alchemist Agent removes that barrier entirely.

It does not deliver your full deck. That remains yours. But it delivers the analytical layer that your deck has always been missing. Distillation finds the structure in your survey battery and creates the KPI index that your cross-tabs have been circling around. Catalyst tells you not just what drives satisfaction but which drivers would actually move the needle if you invested in them. Segmentation reveals the behavioural clusters that your demographic cuts were masking. The output arrives as a formatted analytical section, ready to be incorporated into your existing presentation.

The research executive stays in control. The project context stays where it belongs, with the person who has it. And the advanced analysis that previously required a separate specialist engagement, a separate briefing, a separate wait, and a separate translation effort is available in the time it takes to upload a file.

That value is not limited to traditional research agencies. DIY researchers inside client businesses, running their own surveys on platforms like Qualtrics or SurveyMonkey, face exactly the same barrier. They have the data. They have the business context. But the advanced analytical layer has always been out of reach without a data science resource they rarely have access to. The Alchemist Agent gives them that layer directly.

The same applies to small agencies and freelance researchers. The inability to deliver driver analysis, dimensionality reduction, and behavioural segmentation at the standard of a large agency with a dedicated data science team has historically been a competitive disadvantage for smaller practices. That gap closes when the analytical capability is accessible to anyone with a dataset and a research question.

That is the value proposition. Not a replacement for the cross-tab deck. The analytical depth that turns it from average to outstanding, accessible to any researcher regardless of the size of their team or the tools they already use.

What the Agent Actually Does

The Alchemist Agent is not a chatbot that suggests analyses for you to run. It executes them.

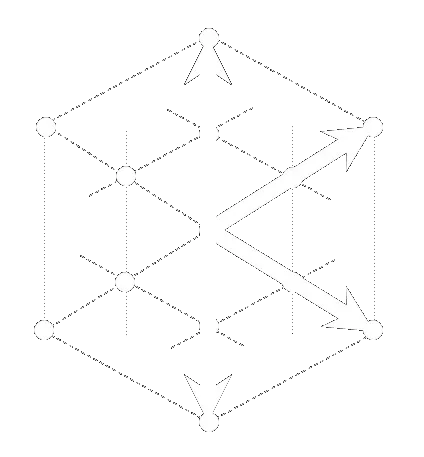

You describe your research question in plain language. The Agent interprets your intent, plans the analytical workflow, selects the appropriate engines from the Data Alchemy Platform, sequences them optimally, executes the full pipeline, and generates a branded strategic report.

A typical workflow might look like this. You upload a survey dataset and ask: "What drives customer satisfaction, and which customer segments respond differently to our top drivers?" The Agent runs Distillation to identify the underlying structure of your satisfaction measures, then Catalyst to quantify the importance and impact of each driver, then Segmentation to identify behavioural clusters in your data, then the Persona Engine to bring those segments to life as strategic archetypes. Each engine feeds the next. The output of Distillation informs the Catalyst analysis. The Catalyst findings shape the Segmentation. The Segmentation results structure the Personas. A workflow that would take an experienced analyst several days to run manually, with multiple handoffs between tools and significant time spent preparing inputs for each stage, completes autonomously.
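The "each engine feeds the next" idea can be sketched in a few lines of Python. This is a minimal illustration of chaining, not the Agent's implementation: `run_chain` and the four stage functions are hypothetical stand-ins that only record what they consumed, so the lineage of each output is visible.

```python
from typing import Callable, Dict, List, Tuple

# Each stage receives everything produced so far and returns its own output.
Results = Dict[str, object]
Stage = Callable[[Results], object]


def run_chain(data: object, stages: List[Tuple[str, Stage]]) -> Results:
    """Run stages in order, storing each output under the stage's name."""
    results: Results = {"data": data}
    for name, stage in stages:
        results[name] = stage(results)
    return results


# Hypothetical placeholder stages (assumptions, not the real engines).
# Each one reads the previous stage's output and wraps it in a label.
def distillation(results: Results) -> str:
    return f"index({len(results['data'])} rows)"


def catalyst(results: Results) -> str:
    return f"drivers({results['distillation']})"


def segmentation(results: Results) -> str:
    return f"segments({results['catalyst']})"


def personas(results: Results) -> str:
    return f"personas({results['segmentation']})"


CHAIN = [
    ("distillation", distillation),
    ("catalyst", catalyst),
    ("segmentation", segmentation),
    ("personas", personas),
]

# Toy survey rows, invented purely for the example.
rows = [[4, 5, 3], [2, 1, 2], [5, 5, 4]]
results = run_chain(rows, CHAIN)
print(results["personas"])
```

The point of the design is that no stage needs its inputs prepared by hand: whatever the previous stage produced is already in `results` when the next one starts.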

A Note on Speed: What Is Actually Fast

I want to be precise about this, because precision matters for credibility.

The analytical computation, the machine learning pipelines that power driver analysis, dimensionality reduction, and behavioural segmentation, runs in seconds. A Catalyst driver analysis completes in approximately five seconds. A chained multi-engine workflow, Distillation feeding into Catalyst feeding into Segmentation, runs in approximately twelve seconds. These are verified benchmarks. For context, the same analytical work performed manually by a skilled analyst, including data preparation, model specification, execution, and output formatting, typically takes between one and three days.

The full end-to-end user experience, from CSV upload through analysis to a branded PowerPoint report ready for download, completes in minutes. That includes data ingestion, the analytical pipeline, narrative generation, and report formatting. It is not instant. But it is transformative relative to the days-to-weeks timeline that is still the industry norm.

I make this distinction because overclaiming is its own form of credibility damage. "Instant results" invites scepticism. "Analysis in seconds, reports in minutes, versus the days-to-weeks industry standard" is both accurate and more impressive, because it is specific and defensible.

Four Engines, One Orchestrator

The Alchemist Agent currently orchestrates four engines from the Data Alchemy Platform:

Description handles exploratory data profiling and survey structure analysis. It is typically the first engine in any workflow, providing the foundation that subsequent analyses build on.

Distillation performs dimensionality reduction and KPI index creation. When you have a battery of twenty survey questions that are all measuring variants of the same underlying construct, Distillation finds the underlying structure and creates a clean index. This is the engine that solves the dimensionality problem in survey research.
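To make the idea of collapsing a battery into one index concrete, here is a deliberately simple stand-in: an equal-weight composite that standardises each question and averages the results per respondent. This is an assumption for illustration only. It is not how Distillation works, and a real dimensionality reduction would typically use something like factor analysis or principal components. The function name `composite_index` and the sample data are hypothetical.

```python
from statistics import mean, pstdev


def composite_index(responses):
    """Equal-weight index: z-score each item, then average per respondent.

    `responses` is a list of rows, one per respondent, each row holding
    that respondent's answers to the battery items in the same order.
    """
    n_items = len(responses[0])
    columns = [[row[j] for row in responses] for j in range(n_items)]
    # Mean and population standard deviation for each item.
    stats = [(mean(col), pstdev(col)) for col in columns]

    index = []
    for row in responses:
        # Skip items with zero variance: they carry no information.
        z = [(row[j] - m) / s for j, (m, s) in enumerate(stats) if s > 0]
        index.append(mean(z) if z else 0.0)
    return index


# Three respondents answering a three-item battery (invented data).
battery = [[1, 2, 1], [3, 3, 3], [5, 4, 5]]
scores = composite_index(battery)
print(scores)
```

Standardising first means a question answered on a wider spread of values does not dominate the index simply because of its scale.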

Catalyst is the driver analysis engine. It identifies the Importance and Impact of each driver on an outcome variable, distinguishing between factors that matter statistically and factors that would actually move the needle if you invested in them. That Importance versus Impact distinction is where the strategic value lives.
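One way to picture the Importance versus Impact distinction is a rough heuristic in the spirit of importance-performance analysis. The sketch below assumes Importance is the correlation between a driver and the outcome, and Impact is that correlation scaled by how much room the driver has left to improve. Both definitions are my illustrative assumptions, not Catalyst's actual method, and `rank_drivers`, the driver names, and the data are hypothetical.

```python
from statistics import mean


def pearson(x, y):
    """Pearson correlation between two equal-length lists of numbers."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / (vx * vy) ** 0.5


def rank_drivers(drivers, outcome, scale_min=1, scale_max=5):
    """Rank drivers by impact = importance x headroom (assumed heuristic)."""
    rows = []
    for name, scores in drivers.items():
        importance = pearson(scores, outcome)
        # Headroom: share of the rating scale still above the current mean.
        headroom = (scale_max - mean(scores)) / (scale_max - scale_min)
        rows.append({
            "driver": name,
            "importance": importance,
            "impact": importance * headroom,
        })
    return sorted(rows, key=lambda r: r["impact"], reverse=True)


# Invented 1-5 ratings from five respondents.
satisfaction = [1, 2, 3, 4, 5]
drivers = {
    "staff": [4, 4, 5, 5, 5],      # strongly related, already scoring high
    "wait_time": [1, 2, 2, 3, 4],  # strongly related, plenty of room to improve
}
ranked = rank_drivers(drivers, satisfaction)
for row in ranked:
    print(row["driver"], round(row["importance"], 2), round(row["impact"], 2))
```

In this toy data both drivers correlate strongly with satisfaction, but only one has meaningful room to improve, which is the kind of gap between "matters statistically" and "would move the needle" that the distinction is meant to expose.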

Segmentation performs outcome-based behavioural clustering. It identifies segments defined by what people do and why, rather than who they are demographically. The segments it creates are designed to be analytically meaningful and strategically actionable.

Synthetic Projection and Imputation are both available now as self-service applications on the Data Alchemy Platform, ready to use independently. Integration with the Alchemist Agent, enabling them to feed directly into chained analytical workflows, is in development.

Three Ways to Access

The Alchemist Agent will be available through three access tiers, all of which are in active development.

Chat is the conversational interface, accessible through the Data Alchemy Platform. You describe your research question, the Agent plans and executes the workflow, and you download the output. No technical configuration required.

Headless API provides programmatic access for technical teams who want to integrate the Agent into their own workflows, dashboards, or applications. The API streams results, and requests are authenticated with API keys.

Agent-to-Agent is the most advanced tier, enabling machine-to-machine integration within larger AI orchestration frameworks. The Alchemist Agent becomes a callable analytical service within a broader autonomous research pipeline.

All three tiers are launching soon. If you want to be notified when access opens, book a discovery call and we will make sure you are first to know.

What This Means for Research Teams

The Alchemist Agent does not replace analytical expertise. It removes the administrative overhead that consumes analytical expertise.

A skilled researcher using the Alchemist Agent can run the same analysis they would have run manually, but without the days of data preparation, model setup, output formatting, and slide production. That time goes back to what a skilled researcher should actually be doing: understanding the client's problem deeply enough to know which analysis to run, interpreting the output well enough to know what it means, and communicating the implications clearly enough that something actually changes as a result.

The research industry has always had a translation problem. Insight created and insight acted upon are not the same thing, and the gap between them is where research value goes to die. The Alchemist Agent narrows that gap by getting from data to analytical output in seconds and to a formatted strategic section in minutes.

The insight still requires human judgment to be meaningful. The project context, the client relationship, the strategic framing: those remain entirely yours. But the analytical infrastructure that delivers the depth behind your recommendations no longer requires a separate team, a separate briefing, or a separate wait.